Trump bans Anthropic from all federal government use and the Pentagon declares the AI company a “supply chain risk” — the same designation reserved for China’s Huawei — after Anthropic CEO Dario Amodei refused to allow the US military to use its AI for mass domestic surveillance of American citizens or fully autonomous weapons that kill without human approval. Hours later, OpenAI swooped in and signed a Pentagon contract — but with the same guardrails Anthropic had demanded all along.

Key Takeaways:

- Trump ordered every federal agency to immediately stop using Anthropic’s AI tools on Friday, February 27, 2026.

- Defense Secretary Pete Hegseth declared Anthropic a “supply chain risk” — a designation normally used for Chinese military companies like Huawei — after Anthropic refused to remove two safety restrictions from its AI: no mass domestic surveillance and no fully autonomous kill decisions.

- Anthropic was the first and, until this week, the only AI company cleared to operate in the Pentagon’s classified networks. Claude was already being used for intelligence analysis, operational planning, and cyber operations, and was reportedly used in the US capture of Venezuelan president Nicolás Maduro last month.

- Within hours of the ban, OpenAI CEO Sam Altman announced a deal with the Pentagon — but explicitly included the same two restrictions Anthropic had demanded: no domestic mass surveillance, human responsibility for use of force.

- Anthropic says it will challenge the “supply chain risk” designation in court, calling it “legally unsound.”

- Employees at both Google and OpenAI publicly supported Anthropic in an open letter — a rare cross-company rebuke of the administration.

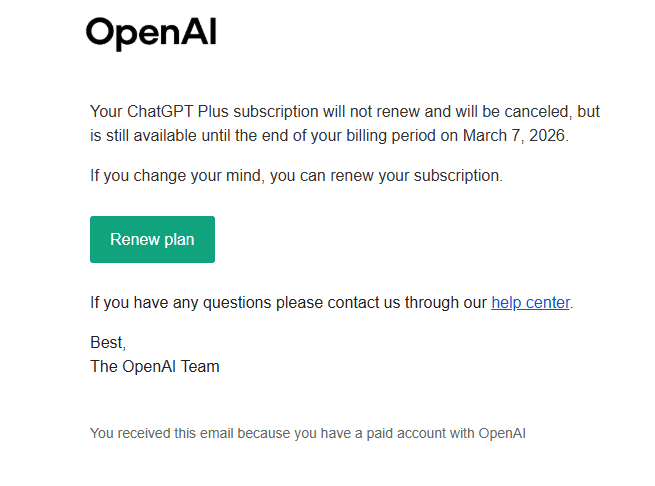

- Mass cancellations of OpenAI subscriptions, including the author’s, have already begun.

For months, the Pentagon had been pressuring Anthropic to sign a contract that permitted the US military to use its AI model, Claude, for any lawful purpose — a seemingly innocuous phrase that concealed two deeply specific demands: the ability to conduct mass domestic surveillance of American citizens, and the ability to deploy fully autonomous weapons systems that select and engage targets without any human making the final call.

Anthropic — which was the first and until this week the only AI company approved for use in the Pentagon’s classified networks — said no. It had said no for months. And on Thursday, February 26, 2026, CEO Dario Amodei published a full public statement explaining why the company “cannot in good conscience” comply, even as Secretary of Defense Pete Hegseth gave them until Friday evening to capitulate or face consequences.

Anthropic didn’t capitulate. On Friday, February 27, President Trump ordered all federal agencies to immediately stop using Anthropic’s products. Hegseth followed by formally designating Anthropic a “supply chain risk to national security” — a designation that, as even the Pentagon’s own AI policymakers noted with alarm, is normally reserved for foreign adversaries. Applying it to an American company was, by any measure, extraordinary. The designation forces every military contractor in the country to prove their Pentagon work has no contact with Anthropic’s technology — effectively a blacklist of a US company for refusing to enable domestic spying.

This is the same administration that has designated accountability as the enemy. The same pattern. A company says no. The government punishes them. Not because they’re wrong. Because they said no.

It’s worth being precise about what Anthropic actually refused — because the Trump administration’s framing of this as “woke AI” is a deliberate distortion designed to make safety guardrails sound like political ideology.

In his statement, Amodei was explicit. Anthropic supports military use of AI. Claude was already being used for intelligence analysis, operational planning, cyber operations, and other mission-critical tasks. Anthropic gave up hundreds of millions in revenue by cutting off Chinese military-linked firms from Claude. It called for chip export controls to protect America’s AI lead. It was, by its own description and by the government’s prior conduct, a deeply cooperative military partner.

The two things they refused:

1. Mass domestic surveillance of American citizens. Amodei wrote: “Using these systems for mass domestic surveillance is incompatible with democratic values. AI-driven mass surveillance presents serious, novel risks to our fundamental liberties.” He noted that under current law, the government can already purchase detailed records of Americans’ movements, web browsing, and social associations from commercial data brokers without a warrant — because the law hasn’t caught up to the technology. Powerful AI makes it possible to assemble all that scattered data into a comprehensive picture of any person’s life automatically and at massive scale. Anthropic wasn’t willing to be the engine for that.

2. Fully autonomous weapons. Amodei was careful to distinguish: partially autonomous weapons, like the drone systems used in Ukraine, were fine. The line was fully autonomous weapons — those where AI selects and engages targets with no human in the loop at all. His argument was not philosophical. It was technical: “Frontier AI systems are simply not reliable enough to power fully autonomous weapons.” Deploying unreliable AI in life-or-death targeting decisions puts American warfighters and civilians at risk. Anthropic offered to do R&D with the Pentagon to improve that reliability. The Pentagon declined.

The pattern is the same one seen in financial deregulation: the government removes the guardrails, insists everything will be fine, and then the catastrophic failure lands on ordinary people who had no say in the decision.

Here’s where the story gets both absurd and revealing. Within hours of Trump banning Anthropic, OpenAI CEO Sam Altman posted a statement announcing his company had signed a deal with the Pentagon — but then listed, almost word for word, the same restrictions Anthropic had demanded.

“Two of our most important safety principles are prohibitions on domestic mass surveillance and human responsibility for the use of force, including for autonomous weapon systems,” Altman wrote. “The DoW agrees with these principles, reflects them in law and policy, and we put them into our agreement.”

Pete Hegseth, who had just declared Anthropic a national security threat for holding this exact position, re-posted Altman’s announcement. The Pentagon’s Under Secretary for technology Emil Michael posted that having “a reliable and steady partner that engages in good faith makes all the difference.”

Read that again. The Pentagon punished Anthropic for insisting on no domestic surveillance and no autonomous kill decisions. Then it signed a deal with OpenAI that includes no domestic surveillance and no autonomous kill decisions. And called OpenAI a “good faith partner.” CNN reported that it could not identify what meaningfully distinguished the two deals. OpenAI itself has not clarified what it agreed to that Anthropic didn’t.

The most plausible explanation isn’t policy. It’s politics. Elon Musk — who heads DOGE, advises the White House, and owns xAI (which had already secured its own Pentagon classified-network deal earlier that week) — has been a direct competitor of both Anthropic and OpenAI. Anthropic’s CEO Dario Amodei has a documented history of opposing Trump administration priorities. Hegseth has publicly called Anthropic’s position “woke AI.” The attack on Anthropic looks far less like a principled national security decision and far more like an ideologically motivated punishment for not being sufficiently aligned with this administration’s political interests.

The Anthropic case doesn’t exist in isolation. It’s part of a documented, escalating pattern of this administration using government contracts, legal designations, and regulatory power as punishment tools against entities — companies, law firms, universities, nonprofits, media organizations — that decline to comply with its demands.

Law firms that represented clients Trump didn’t like have had their government contracts threatened or cancelled. Universities that didn’t comply with DEI policy demands had federal funding frozen. Media organizations that published unfavorable coverage faced pressure through regulatory bodies and government advertising budgets. Now an AI company that said “we won’t spy on American citizens” has been labeled a threat equivalent to Huawei.

This is what unchecked executive power looks like when it meets a sector that hasn’t been fully captured yet. The AI industry is one of the few remaining areas of the US economy with concentrated private power capable of pushing back against government demands. What happens here — whether Anthropic wins its lawsuit, whether OpenAI’s deal holds, whether other AI companies fold — will set the template for how far this administration can go in commandeering private technology for domestic political purposes.

The Electronic Frontier Foundation said it plainly: “Tech companies shouldn’t be bullied into doing surveillance.” They shouldn’t. But they are being bullied. And whether the AI industry, as a whole, has the spine to hold that line is one of the defining questions of 2026.

Meanwhile, Elon Musk’s xAI — which operates without the public safety disclosures Anthropic published — already secured its own Pentagon classified-network deal earlier this week. No controversy. No public statement. No guardrails debate. The administration didn’t demand xAI justify its safety protocols. It didn’t need to. xAI is politically aligned.

Here’s the part that should concern anyone who isn’t a defense contractor or a White House adviser. This fight isn’t about corporate competition between AI companies. The two restrictions Anthropic refused to drop — no mass domestic surveillance, no autonomous kill decisions without human approval — are protections that exist, to the extent they exist at all, specifically to protect you.

The government doesn’t need a warrant to buy your location history from data brokers. It doesn’t need a warrant to buy your web browsing history, your app usage data, your social graph. The Fourth Amendment, as currently interpreted, has not kept pace with what commercial data collection makes possible. The only thing standing between the government and an AI-powered comprehensive surveillance system of every American’s movements, associations, and communications is the companies that build the AI refusing to be the instrument of that system.

Anthropic refused. And they were punished for it with the same designation used for Chinese military companies. What happens when every AI company that wants government contracts learns that lesson?

The consumer backlash, with people cancelling OpenAI subscriptions in solidarity with Anthropic, and the joint open letter signed by employees at Google and OpenAI in support of Anthropic’s position suggest that the public understands the stakes even if the political class doesn’t. Tech workers who have to live under the same government systems they’re building are drawing a line. That matters.

The irony noted by multiple legal observers is stark: the Pentagon simultaneously argued that Anthropic was a “supply chain risk” and that Claude was essential to active military operations. If Anthropic is a national security threat, why was its AI being used last month to help capture a foreign head of state? The logical incoherence of the designation is the point: it’s a punishment tool, not a legal determination.

Why did Trump ban Anthropic?

President Trump ordered all federal agencies to stop using Anthropic’s AI products after the company refused to allow the Pentagon to use its AI model Claude for mass domestic surveillance of American citizens and fully autonomous weapons systems that operate without human approval. Defense Secretary Pete Hegseth then formally designated Anthropic a “supply chain risk to national security” — a designation normally reserved for foreign adversaries like China’s Huawei.

What did OpenAI agree to that Anthropic didn’t?

That remains unclear. OpenAI CEO Sam Altman’s announcement explicitly included the same two restrictions Anthropic had demanded: no domestic mass surveillance and human responsibility for the use of force. CNN and other outlets noted they could not identify what meaningfully distinguished the two deals. The Pentagon has not clarified. The most likely explanation is political alignment, not substantive policy difference.

What is Anthropic doing now?

Anthropic has announced it will challenge the “supply chain risk” designation in court, calling it “legally unsound.” The company has also stated it will work to enable a smooth transition for any ongoing Pentagon operations that used Claude, to avoid disrupting active military missions.

Why does this matter to people who don’t use AI?

The two restrictions Anthropic refused to remove — no mass domestic surveillance, no autonomous kill decisions — are protections for ordinary Americans’ civil liberties. Under current law, the government can purchase detailed records of Americans’ movements and online activity without a warrant. AI systems powerful enough to assemble that data into comprehensive profiles of every American’s life already exist. Whether companies are willing to be the instrument of that system — or refuse, as Anthropic did — is one of the most consequential civil liberties fights of the decade.

Primary sources for this article: Dario Amodei’s full statement, Anthropic (Feb 26, 2026); CNN, “OpenAI strikes deal with Pentagon hours after Trump admin bans Anthropic” (Feb 27, 2026); The Guardian, “Anthropic says it ‘cannot in good conscience’ allow Pentagon to remove AI checks” (Feb 26, 2026); BBC News, “Trump orders government to stop using Anthropic in battle over AI use” (Feb 27, 2026); ABC News, “Trump orders US government to cut ties with Anthropic” (Feb 27, 2026); NPR, “Hegseth threatens to blacklist Anthropic over ‘woke AI’ concerns” (Feb 24, 2026); Electronic Frontier Foundation, “Tech Companies Shouldn’t Be Bullied Into Doing Surveillance” (Feb 2026); DefenseScoop, “Experts raise questions and concerns about Pentagon’s threat to designate Anthropic a supply chain risk” (Feb 27, 2026); Sam Altman public statement on X (Feb 27, 2026); Yahoo News/Reuters, “Employees at Google and OpenAI support Anthropic’s Pentagon stand in open letter” (Feb 27, 2026).